Exploring the HTML5 Web Audio: Visualizing Sound


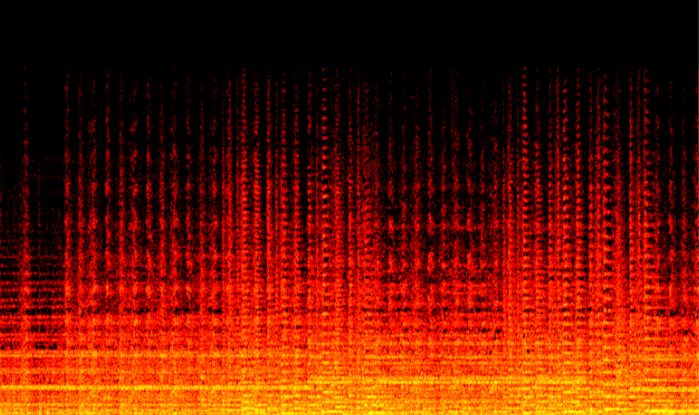

If you've read some of my other articles on this blog, you probably know I'm a fan of HTML5. With HTML5 we get all this interesting functionality directly in the browser, in a way that will eventually be standard across browsers. One of the new HTML5 APIs that is slowly moving through the standardization process is the Web Audio API. With this API, currently only supported in Chrome, we get access to all kinds of interesting audio components you can use to create, modify and visualize sounds (such as a spectrogram).

So why do I start with visualizations? It looks nice, that's one reason, but not the most important one. This API provides a number of more complex components whose behavior is much easier to explain when you can see what happens. With a filter, for instance, you can instantly see whether certain frequencies are filtered out, instead of trying to hear these changes in the resulting audio.

There are many interesting examples that use this API. The problem, though, is that how to get started with this API and with digital signal processing (DSP) usually isn't explained. In this article I'll walk you through a couple of steps that show how to do the following:

- Create a signal volume meter

- Visualize the frequencies using a spectrum analyzer

- Show a time-based spectrogram

We start with a basic setup that serves as the foundation for the components we'll create.

Setting up the basics

If we want to experiment with sound, we need a sound source. We could use the microphone (as we'll do later in this series), but to keep it simple, for now we'll just use an audio file as our input. To get this working with the Web Audio API, we have to take the following steps:

- Load the data

- Read it into a buffer node and play the sound

Load the data

With the Web Audio API we can use different types of audio sources. The MediaElementAudioSourceNode uses the audio provided by a media element. There's also the MediaStreamAudioSourceNode, with which we can use the microphone as input (see my previous article on sound recognition). Finally there is the AudioBufferSourceNode, which lets us load the data from an existing audio file (e.g. an mp3) and use that as input. For this example we'll use this last approach.

// create the audio context (older Chrome versions only provide the
// prefixed webkitAudioContext)

var context = new (window.AudioContext || window.webkitAudioContext)();

var audioBuffer;

var sourceNode;

// load the sound

setupAudioNodes();

loadSound("wagner-short.ogg");

function setupAudioNodes() {

// create a buffer source node

sourceNode = context.createBufferSource();

// and connect to destination

sourceNode.connect(context.destination);

}

// load the specified sound

function loadSound(url) {

var request = new XMLHttpRequest();

request.open('GET', url, true);

request.responseType = 'arraybuffer';

// When loaded decode the data

request.onload = function() {

// decode the data

context.decodeAudioData(request.response, function(buffer) {

// when the audio is decoded play the sound

playSound(buffer);

}, onError);

}

request.send();

}

function playSound(buffer) {

sourceNode.buffer = buffer;

sourceNode.start(0); // formerly noteOn(0)

}

// log if an error occurs

function onError(e) {

console.log(e);

}

In this example you can see a couple of functions. The setupAudioNodes function creates a BufferSource audio node and connects it to the destination. The loadSound function shows how you can load an audio file with an XMLHttpRequest and decode it with decodeAudioData. The buffer that is passed into the playSound function contains decoded audio that can be used by the Web Audio API.

In this example I use an .ogg file. For a complete overview of the supported formats, look at: https://sites.google.com/a/chromium.org/dev/audio-video
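In browsers that support fetch() and the promise-returning form of decodeAudioData, the same loading step can be written without nested callbacks. The following is a minimal sketch under those assumptions; loadSoundAsync is a hypothetical name, and the fetch function is passed in as a parameter only so the logic can be exercised outside a browser.

```javascript
// Minimal sketch, assuming fetch() and a promise-returning
// context.decodeAudioData() are available (loadSoundAsync is hypothetical).
async function loadSoundAsync(context, url, fetchFn = globalThis.fetch) {
  const response = await fetchFn(url);
  if (!response.ok) {
    throw new Error("Could not load " + url + " (HTTP " + response.status + ")");
  }
  // the raw, still-encoded bytes of the audio file
  const encoded = await response.arrayBuffer();
  // resolves to an AudioBuffer that can be assigned to sourceNode.buffer
  return context.decodeAudioData(encoded);
}

// Usage in the browser, reusing playSound and onError from above:
// loadSoundAsync(context, "wagner-short.ogg").then(playSound).catch(onError);
```

Errors from the network request and from decoding both end up in the single catch handler, which takes over the role of the onError callback.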

Play the sound

To play this audio file, all we have to do is start the source node, which is done in the playSound function:

function playSound(buffer) {

sourceNode.buffer = buffer;

sourceNode.start(0); // formerly noteOn(0)

}

When you run this, you'll hear some music. Nothing too spectacular for now, but it's an easy way to load audio that we'll use for the rest of this article. The first item on our list was the volume meter.

Create a volume meter

One of the basic scenarios, and often one of the first things someone new to this API tries to create, is a simple signal volume meter (or VU meter). I expected this to be a standard component in this API, where I could just read off the signal strength as a property. But no such node exists. Not to worry, though: with the components that are available, it's pretty easy (not straightforward, but easy nevertheless) to get an indication of the signal strength of your audio file. In this section we'll create a simple volume meter.

The meter measures the signal strength of the left and the right audio channel. It is drawn on a canvas, but you could also use divs or SVG to visualize it. Let's start with a single volume meter instead of one for each channel. For this we need to do the following:

- Create an analyser node: with this node we get real-time information about the data that is processed, which we use to determine the signal strength

- Create a JavaScript node: we use this node as a timer to update the volume meters with new information

- Connect everything together

Analyser node

With the analyser node we can perform real-time frequency and time domain analysis. From the specification:

a node which is able to provide real-time frequency and time-domain analysis information. The audio stream will be passed un-processed from input to output.

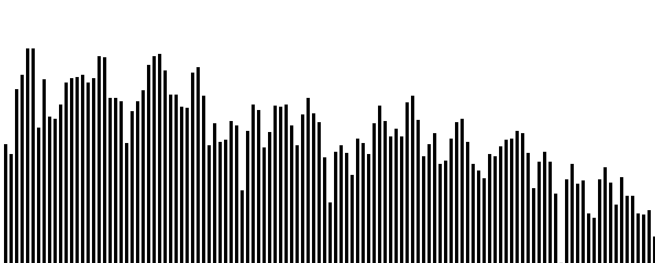

I won't go into the mathematical details behind this node, since there are many articles out there that explain how this works (a good one is the chapter on the Fourier transform in a DSP book). What you should know about this node is that it splits up the signal into frequency buckets, and we get the amplitude (the signal strength) for each set of frequencies (each bucket). The best way to understand this is to skip ahead a bit in this article and look at the frequency distribution we'll create later on.

That visualization plots the result from the analyser node. The frequencies increase from left to right, and the height of each bar shows the strength of that specific frequency bucket. More on this later in the article. For now we don't want to see the strength of the separate frequency buckets, but the strength of the total signal. For this we'll just add up the strengths of all the buckets and divide the sum by the number of buckets.
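It helps to know which frequencies a bucket actually covers. For an FFT of size fftSize on audio sampled at sampleRate, bucket k sits at k * sampleRate / fftSize Hz, so neighboring buckets are sampleRate / fftSize Hz apart, and the fftSize / 2 buckets the analyser exposes run from 0 Hz up to just below half the sample rate. A small sketch with hypothetical helper names, assuming the 44.1 kHz sample rate used in this article:

```javascript
// Sketch: relate analyser buckets ("bins") to frequencies in Hz.
// Helper names are hypothetical; the formulas are the standard FFT bin spacing.
function binWidthHz(sampleRate, fftSize) {
  return sampleRate / fftSize; // distance between neighboring buckets
}

function binToFrequency(binIndex, sampleRate, fftSize) {
  return binIndex * binWidthHz(sampleRate, fftSize);
}

function frequencyToBin(frequencyHz, sampleRate, fftSize) {
  const bin = Math.round(frequencyHz / binWidthHz(sampleRate, fftSize));
  // clamp to the buckets the analyser exposes: 0 .. fftSize / 2 - 1
  return Math.min(Math.max(bin, 0), fftSize / 2 - 1);
}

// With the volume meter settings (44.1 kHz, fftSize 1024):
console.log(binWidthHz(44100, 1024).toFixed(2));          // "43.07"
console.log(binToFrequency(511, 44100, 1024).toFixed(0)); // "22007"
console.log(frequencyToBin(440, 44100, 1024));            // 10
```

So with an fftSize of 1024, every bucket spans roughly 43 Hz, and the note A4 (440 Hz) lands in bucket 10.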

First we need to create an analyser node:

// setup an analyser

analyser = context.createAnalyser();

analyser.smoothingTimeConstant = 0.3;

analyser.fftSize = 1024;

This creates an analyser node whose result will be used to create the volume meter. We use a smoothingTimeConstant to make the meter less jittery: each new measurement is blended with the previous result, so the amplitudes reflect a longer time period and the meter moves more smoothly. The fftSize determines how many buckets of frequency information we get: with an fftSize of 1024 we get 512 buckets (more info on this in the book on DSP and Fourier transforms).
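To make smoothingTimeConstant and the byte values a bit more concrete, here is a sketch of the per-bucket post-processing the Web Audio specification describes for the analyser: each new magnitude is blended with the previous smoothed value, converted to decibels, and mapped linearly from the minDecibels..maxDecibels range (by default -100 dB to -30 dB) onto 0..255. It is a plain-JavaScript illustration of those formulas, not how Chrome implements them, and the function names are hypothetical.

```javascript
// Sketch of the per-bucket post-processing the Web Audio specification
// describes for AnalyserNode (an illustration, not the browser's code).

// 1. Blend the new magnitude of every bucket with the previous smoothed value.
function smoothMagnitudes(previous, current, smoothingTimeConstant) {
  return current.map((magnitude, k) =>
    smoothingTimeConstant * previous[k] + (1 - smoothingTimeConstant) * magnitude);
}

// 2. Convert a smoothed magnitude to decibels and map the range
//    [minDecibels, maxDecibels] linearly onto 0..255, as
//    getByteFrequencyData does (defaults: -100 dB and -30 dB).
function toByte(magnitude, minDecibels = -100, maxDecibels = -30) {
  const db = 20 * Math.log10(magnitude); // -Infinity for a silent bucket
  const scaled = Math.floor((255 / (maxDecibels - minDecibels)) * (db - minDecibels));
  return Math.min(255, Math.max(0, scaled)); // clamp to the byte range
}

console.log(smoothMagnitudes([1.0], [0.0], 0.3)); // [ 0.3 ]
console.log(toByte(0.01));                         // -40 dB -> 218
```

With a smoothingTimeConstant of 0.3, a bucket that suddenly goes silent still keeps 30% of its previous magnitude in the next frame, which is what calms the meter down; with 0, every frame stands on its own.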

When this node receives a stream of data, it analyzes the stream and provides us with information about the frequencies in that signal and their strengths. We now need a timer to update the meter at regular intervals. We could use the standard JavaScript setInterval function, but since we're looking at the Web Audio API, let's use one of its nodes: the JavaScriptNode.

The javascript node

With the JavaScript node we can process the raw audio data directly from JavaScript. We could use this to write our own analyzers or complex components, but we're not going to do that here. When creating the JavaScript node, you specify how many sample-frames it collects between calls, which determines the interval at which it is called. We'll use that to update the meter at regular intervals.
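The arithmetic behind that interval is simple: every call delivers bufferSize sample-frames, so the handler runs sampleRate / bufferSize times per second. A sketch with a hypothetical callbackRate helper; the list of allowed buffer sizes is the one the specification gives for createScriptProcessor, the current name of createJavaScriptNode.

```javascript
// Sketch: how often does the processing callback fire?
// callbackRate is hypothetical; the allowed sizes follow the specification
// for createScriptProcessor (0, meaning "browser chooses", is left out here).
const ALLOWED_BUFFER_SIZES = [256, 512, 1024, 2048, 4096, 8192, 16384];

function callbackRate(sampleRate, bufferSize) {
  if (!ALLOWED_BUFFER_SIZES.includes(bufferSize)) {
    throw new RangeError("Unsupported buffer size: " + bufferSize);
  }
  return {
    callsPerSecond: sampleRate / bufferSize,
    intervalMs: (bufferSize / sampleRate) * 1000,
  };
}

const rate = callbackRate(44100, 2048);
console.log(rate.callsPerSecond.toFixed(1)); // "21.5"
console.log(rate.intervalMs.toFixed(1));     // "46.4"
```

On hardware running at 48 kHz, the same 2048-frame buffer gives about 23.4 calls per second, so it's worth reading context.sampleRate rather than assuming 44.1 kHz.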

Creating a javascript node is very easy.

// setup a javascript node (created with createJavaScriptNode in
// early Chrome builds; the current name is createScriptProcessor)

javascriptNode = context.createScriptProcessor(2048, 1, 1);

This will create a JavaScript node that is called whenever 2048 frames have been sampled. Since our data is sampled at 44.1 kHz, this function will be called approximately 21 times a second. Now what happens when this function is called:

// when the javascript node is called

// we use information from the analyzer node

// to draw the volume

javascriptNode.onaudioprocess = function() {

// get the average, bincount is fftsize / 2

var array = new Uint8Array(analyser.frequencyBinCount);

analyser.getByteFrequencyData(array);

var average = getAverageVolume(array);

// clear the current state

ctx.clearRect(0, 0, 60, 130);

// set the fill style

ctx.fillStyle=gradient;

// create the meters

ctx.fillRect(0,130-average,25,130);

}

function getAverageVolume(array) {

var values = 0;

var average;

var length = array.length;

// get all the frequency amplitudes

for (var i = 0; i < length; i++) {

values += array[i];

}

average = values / length;

return average;

}

In these two functions we calculate the average and draw the meter directly on the canvas (using a gradient so we get nice colors). Now all we have to do is connect the output from the audio source to the analyser, and the analyser to the JavaScript node (and if we want to hear the audio, we also need to connect something to the destination).
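The average is a byte value from 0 to 255, while the meter in this example is only 130 pixels tall, so with fillRect(0, 130 - average, 25, 130) any average above 130 simply fills the whole meter. A sketch of a hypothetical meterRect helper that scales the average to the meter height instead:

```javascript
// Sketch: scale a 0..255 average to a meter of meterHeight pixels and
// return the fillRect arguments. meterRect is a hypothetical helper.
function meterRect(average, x, barWidth, meterHeight) {
  // clamp the input to the byte range the analyser produces
  const clamped = Math.min(255, Math.max(0, average));
  const barHeight = Math.round((clamped / 255) * meterHeight);
  // canvas y grows downward, so the bar starts barHeight pixels above the bottom
  return { x: x, y: meterHeight - barHeight, width: barWidth, height: barHeight };
}

// Usage inside onaudioprocess (ctx and gradient as in the article):
// var r = meterRect(average, 0, 25, 130);
// ctx.fillRect(r.x, r.y, r.width, r.height);
```

Returning the rectangle rather than drawing it keeps the scaling testable and lets both channel meters share it, passing x = 0 and x = 30.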

Connect everything together

Connecting everything together is easy:

function setupAudioNodes() {

// setup a javascript node

javascriptNode = context.createScriptProcessor(2048, 1, 1); // formerly createJavaScriptNode

// connect to destination, else it isn't called

javascriptNode.connect(context.destination);

// setup an analyser

analyser = context.createAnalyser();

analyser.smoothingTimeConstant = 0.3;

analyser.fftSize = 1024;

// create a buffer source node

sourceNode = context.createBufferSource();

// connect the source to the analyser

sourceNode.connect(analyser);

// we use the javascript node to draw at a specific interval.

analyser.connect(javascriptNode);

// and connect to destination, if you want audio

sourceNode.connect(context.destination);

}

And that's it. This will draw a single volume meter for the complete signal. Now what do we do when we want a volume meter for each channel? For this we use a ChannelSplitter. Let's dive right into the code to connect everything:

function setupAudioNodes() {

// setup a javascript node

javascriptNode = context.createScriptProcessor(2048, 1, 1); // formerly createJavaScriptNode

// connect to destination, else it isn't called

javascriptNode.connect(context.destination);

// setup an analyser

analyser = context.createAnalyser();

analyser.smoothingTimeConstant = 0.3;

analyser.fftSize = 1024;

analyser2 = context.createAnalyser();

analyser2.smoothingTimeConstant = 0.3; // same smoothing as the first channel

analyser2.fftSize = 1024;

// create a buffer source node

sourceNode = context.createBufferSource();

splitter = context.createChannelSplitter();

// connect the source to the splitter

sourceNode.connect(splitter);

// connect one of the outputs from the splitter to

// the analyser

splitter.connect(analyser,0,0);

splitter.connect(analyser2,1,0);

// we use the javascript node to draw at a

// specific interval.

analyser.connect(javascriptNode);

// and connect to destination

sourceNode.connect(context.destination);

}

As you can see, we don't change much. We introduce a new node, the splitter node, which splits the sound into a left and a right channel. These channels can be processed separately. With this layout the following happens:

- The audio source creates a signal based on the buffered audio.

- This signal is sent to the splitter, which splits the signal into a left and right stream.

- Each of these two streams is processed by their own realtime analyser.

- From the javascript node, we now get the information from both analysers and plot both meters

I've shown steps 1 through 3, so let's quickly move on to step 4. For this we simply change the onaudioprocess handler to the following:

javascriptNode.onaudioprocess = function() {

// get the average for the first channel

var array = new Uint8Array(analyser.frequencyBinCount);

analyser.getByteFrequencyData(array);

var average = getAverageVolume(array);

// get the average for the second channel

var array2 = new Uint8Array(analyser2.frequencyBinCount);

analyser2.getByteFrequencyData(array2);

var average2 = getAverageVolume(array2);

// clear the current state

ctx.clearRect(0, 0, 60, 130);

// set the fill style

ctx.fillStyle=gradient;

// create the meters

ctx.fillRect(0,130-average,25,130);

ctx.fillRect(30,130-average2,25,130);

}

And now we've got two signal meters, one for each channel.


Now let's see how we can create the frequency view I showed earlier.

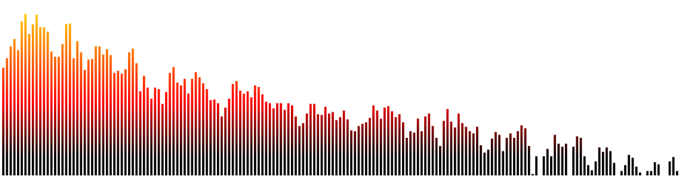

Create a frequency spectrum

With all the work we already did in the previous section, creating a frequency spectrum overview is now very easy. We're going to aim for the bar chart shown above.

We set up the nodes just like we did in the first example:

function setupAudioNodes() {

// setup a javascript node

javascriptNode = context.createScriptProcessor(2048, 1, 1); // formerly createJavaScriptNode

// connect to destination, else it isn't called

javascriptNode.connect(context.destination);

// setup an analyser

analyser = context.createAnalyser();

analyser.smoothingTimeConstant = 0.3;

analyser.fftSize = 512;

// create a buffer source node

sourceNode = context.createBufferSource();

sourceNode.connect(analyser);

analyser.connect(javascriptNode);

// uncomment the next line if you also want to hear the audio

// sourceNode.connect(context.destination);

}

So this time we don't split the channels, and we set the fftSize to 512. This means we get 256 bars that represent our frequencies. We now just need to alter the onaudioprocess handler and the gradient we use:

var gradient = ctx.createLinearGradient(0,0,0,300);

gradient.addColorStop(1,'#000000');

gradient.addColorStop(0.75,'#ff0000');

gradient.addColorStop(0.25,'#ffff00');

gradient.addColorStop(0,'#ffffff');

// when the javascript node is called

// we use information from the analyzer node

// to draw the spectrum

javascriptNode.onaudioprocess = function() {

// get the frequency data for the current frame

var array = new Uint8Array(analyser.frequencyBinCount);

analyser.getByteFrequencyData(array);

// clear the current state

ctx.clearRect(0, 0, 1000, 325);

// set the fill style

ctx.fillStyle=gradient;

drawSpectrum(array);

}

function drawSpectrum(array) {

for ( var i = 0; i < (array.length); i++ ){

var value = array[i];

ctx.fillRect(i*5,325-value,3,325);

}

}

In the drawSpectrum function we iterate over the array and draw a vertical bar for each value. That's it.
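With fftSize 512, the loop above draws 256 bars in 5-pixel slots, so the last bar starts at x = 1275, beyond the 1000-pixel-wide area that clearRect clears (assuming, as that call suggests, a 1000 x 325 canvas). If you want the bars to always fit the canvas, one option is to derive the spacing from the canvas width. A sketch with a hypothetical barLayout helper; the default gap of 40% mimics the original 3-pixel bar in a 5-pixel slot:

```javascript
// Sketch: spread binCount bars evenly over canvasWidth pixels.
// barLayout is a hypothetical helper; gapFraction is the share of each
// slot left empty between neighboring bars.
function barLayout(binCount, canvasWidth, gapFraction = 0.4) {
  const slot = canvasWidth / binCount;       // horizontal space per bin
  const width = slot * (1 - gapFraction);    // fractional widths are fine on canvas
  const bars = [];
  for (let i = 0; i < binCount; i++) {
    bars.push({ x: i * slot, width: width });
  }
  return bars;
}

// Usage in drawSpectrum (compute the layout once, outside the callback):
// var layout = barLayout(array.length, 1000);
// for (var i = 0; i < array.length; i++) {
//   ctx.fillRect(layout[i].x, 325 - array[i], layout[i].width, array[i]);
// }
```

Passing array[i] as the height (instead of 325) also stops each bar from being drawn past the bottom edge of the canvas.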


And then the final one: the spectrogram.

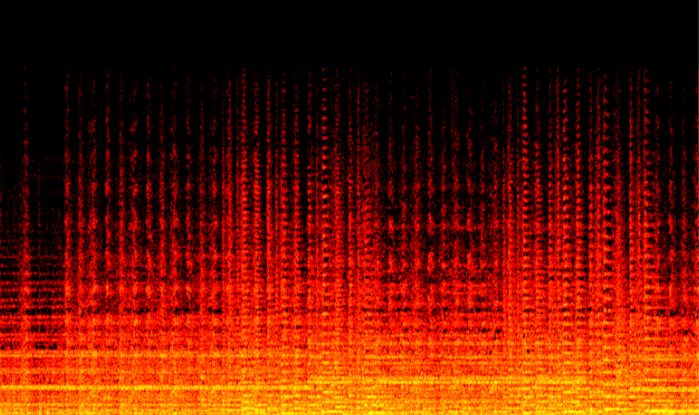

Time based spectrogram

When you run the previous demo you see the strength of the various frequency buckets in real time. While this is a nice visualization, it doesn't allow you to analyze information over a period of time. If you want to do that you can create a spectrogram.

With a spectrogram we plot a single line for each measurement. The y-axis represents the frequency, the x-axis the time, and the color of a pixel the strength of that frequency. It can be used to analyze the received audio, and it also creates nice-looking images.

The good thing is that we don't have to change much from what we've already got in place to output this data. The only function that'll change is the onaudioprocess handler, and we'll create a slightly different analyser.

analyser = context.createAnalyser();

analyser.smoothingTimeConstant = 0;

analyser.fftSize = 1024;

The analyser we create here has an fftSize of 1024, which means we get 512 frequency buckets with strengths, so we can draw a spectrogram that is 512 pixels high. Also note that the smoothingTimeConstant is set to 0. This means we don't use any of the previous results in the analysis: we want to show the real information, not provide a smooth volume meter or frequency spectrum.

The easiest way to draw a spectrogram is to start drawing lines at the left and increase the x-coordinate by one for each new set of frequencies. The problem is that this will quickly fill up our canvas: at roughly 21 lines per second, an 800-pixel-wide canvas is full after less than 40 seconds of audio. To fix this, we need some creative canvas copying. The complete code for drawing the spectrogram is shown here:

// create a temp canvas we use for copying and scrolling

var tempCanvas = document.createElement("canvas"),

tempCtx = tempCanvas.getContext("2d");

tempCanvas.width=800;

tempCanvas.height=512;

// used for color distribution

var hot = new chroma.ColorScale({

colors:['#000000', '#ff0000', '#ffff00', '#ffffff'],

positions:[0, .25, .75, 1],

mode:'rgb',

limits:[0, 300]

});

...

// when the javascript node is called

// we use information from the analyzer node

// to draw the spectrogram

javascriptNode.onaudioprocess = function () {

// get the frequency data for the current frame

var array = new Uint8Array(analyser.frequencyBinCount);

analyser.getByteFrequencyData(array);

// draw the spectrogram

if (sourceNode.playbackState == sourceNode.PLAYING_STATE) {

drawSpectrogram(array);

}

}

function drawSpectrogram(array) {

// copy the current canvas onto the temp canvas

var canvas = document.getElementById("canvas");

tempCtx.drawImage(canvas, 0, 0, 800, 512);

// iterate over the elements from the array

for (var i = 0; i < array.length; i++) {

// draw each pixel with the specific color

var value = array[i];

ctx.fillStyle = hot.getColor(value).hex();

// draw the line at the right side of the canvas
// (bin 0, the lowest frequency, goes in the bottom row, y = 511)

ctx.fillRect(800 - 1, 512 - 1 - i, 1, 1);

}

// set translate on the canvas

ctx.translate(-1, 0);

// draw the copied image

ctx.drawImage(tempCanvas, 0, 0, 800, 512, 0, 0, 800, 512);

// reset the transformation matrix

ctx.setTransform(1, 0, 0, 1, 0, 0);

}

To draw the spectrogram we do the following:

- We copy what is currently drawn to a hidden canvas

- Next we draw a line of the current values at the far right of the canvas

- We translate the canvas by -1 pixel along the x-axis

- We draw the copy back onto the original canvas (so it now ends up 1 pixel to the left)

- And reset the transformation matrix
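The canvas copying above is easier to follow once you strip away the drawing. Here is a sketch of the same scrolling idea on plain typed arrays (ScrollingSpectrogram is a hypothetical class, not part of any library): the image is a list of columns, each new analyser frame drops the oldest column on the left and appends a new column on the right, and bin 0 (the lowest frequency) ends up in the bottom row.

```javascript
// Sketch: a scrolling spectrogram stored as `width` columns of `height` values.
class ScrollingSpectrogram {
  constructor(width, height) {
    this.width = width;
    this.height = height;
    // start with a silent (all-zero) image
    this.columns = Array.from({ length: width }, () => new Uint8Array(height));
  }

  // frequencyData: the Uint8Array filled by getByteFrequencyData
  push(frequencyData) {
    const column = new Uint8Array(this.height);
    // copy at most `height` bins; any extra bins are ignored
    column.set(frequencyData.subarray(0, this.height));
    this.columns.shift();      // like moving the old image 1 pixel to the left
    this.columns.push(column); // like drawing the new line at x = width - 1
  }

  // value at pixel (x, y); y = 0 is the top row, which shows the highest bin
  pixel(x, y) {
    return this.columns[x][this.height - 1 - y];
  }
}
```

Rendering would then mean coloring pixel(x, y) for every x and y. On a real canvas, redrawing every pixel each frame is likely slower than the copy-and-shift approach in drawSpectrogram, so treat this as an illustration of the data flow.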


The last thing I'd like to mention about the code is the chroma.js library I used for the colors. If you ever need to draw something color- or gradient-related (e.g. maps, strengths, levels), you can easily create color scales with this library.
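To see what such a color scale does (or to avoid the dependency), here is a sketch of a piecewise-linear RGB scale with the same stops, positions and 0..300 limits as the chroma.js configuration above. hotColor is a hypothetical helper, not the chroma.js API, and chroma.js's own interpolation may differ in the details.

```javascript
// Sketch: piecewise-linear RGB color scale (black -> red -> yellow -> white).
const STOPS = [
  { pos: 0.0, rgb: [0, 0, 0] },       // black
  { pos: 0.25, rgb: [255, 0, 0] },    // red
  { pos: 0.75, rgb: [255, 255, 0] },  // yellow
  { pos: 1.0, rgb: [255, 255, 255] }, // white
];

function hotColor(value, min = 0, max = 300) {
  // normalize the analyser value to 0..1 and clamp
  const t = Math.min(1, Math.max(0, (value - min) / (max - min)));
  // find the pair of stops that surrounds t
  let i = 0;
  while (i < STOPS.length - 2 && t > STOPS[i + 1].pos) i++;
  const a = STOPS[i];
  const b = STOPS[i + 1];
  const f = (t - a.pos) / (b.pos - a.pos);
  // interpolate each channel and format as a hex color string
  const channels = a.rgb.map((c, k) => Math.round(c + (b.rgb[k] - c) * f));
  return "#" + channels.map((c) => c.toString(16).padStart(2, "0")).join("");
}

console.log(hotColor(0));   // "#000000"
console.log(hotColor(75));  // "#ff0000"
console.log(hotColor(150)); // "#ff8000"
```

One consequence of the 0..300 limits: the analyser's byte values never exceed 255, so in this sketch the brightest possible pixel is hotColor(255), a pale yellow (#ffff66), rather than white.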

Two final pointers, since I know I'll get questions about them:

- Volume could be represented as a magnitude; I just didn't want to complicate matters here.

- The spectrogram doesn't use logarithmic scales. Once again, I didn't want to complicate things.
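If you do want a logarithmic frequency axis, one way is to precompute, for every canvas row, which analyser bucket it shows, with rows spaced logarithmically in bucket index (and thus in frequency). A sketch with hypothetical helpers; bucket 0 (0 Hz) is skipped because the logarithm of zero is undefined.

```javascript
// Sketch: map canvas rows to analyser buckets on a logarithmic axis.
function logRowToBin(row, rowCount, binCount, minBin = 1) {
  // row 0 is the top of the canvas and shows the highest bucket
  const fraction = 1 - row / (rowCount - 1); // 1 at the top, 0 at the bottom
  const logMin = Math.log(minBin);
  const logMax = Math.log(binCount - 1);
  return Math.round(Math.exp(logMin + fraction * (logMax - logMin)));
}

// Build the lookup table once, outside the audio callback.
function buildLogTable(rowCount, binCount) {
  const table = new Array(rowCount);
  for (let y = 0; y < rowCount; y++) {
    table[y] = logRowToBin(y, rowCount, binCount);
  }
  return table;
}

// In drawSpectrogram, row y would then be colored with array[table[y]]
// instead of using the bucket index directly.
const table = buildLogTable(512, 512);
console.log(table[0], table[255], table[511]); // 511 23 1
```

With 512 rows and 512 buckets, the middle row shows bucket 23 (about 1 kHz at 44.1 kHz), so the lower half of the image is devoted to frequencies below roughly 1 kHz, where most musical detail lives. Neighboring high rows will show the same or nearby buckets, which is expected on a log scale.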

Published at DZone with permission of Jos Dirksen, DZone MVB. See the original article here.
